In a small office in Košice, Slovakia, a group of malware analysts leaned closer to glowing screens. At first glance, the code before them looked like another Android Trojan: familiar routines, familiar ambitions.

But something about this one hinted at a different origin story: not just another script written by botnet operators or crime rings, but malware that seemed to reason about the device it was running on. That hint was the presence of generative AI inside its execution flow.

The team behind this discovery was from ESET, a firm with over 30 years of history in threat research. On February 19, 2026, they publicly unveiled PromptSpy, which they described as the first known Android malware to integrate generative AI directly into its execution flow.

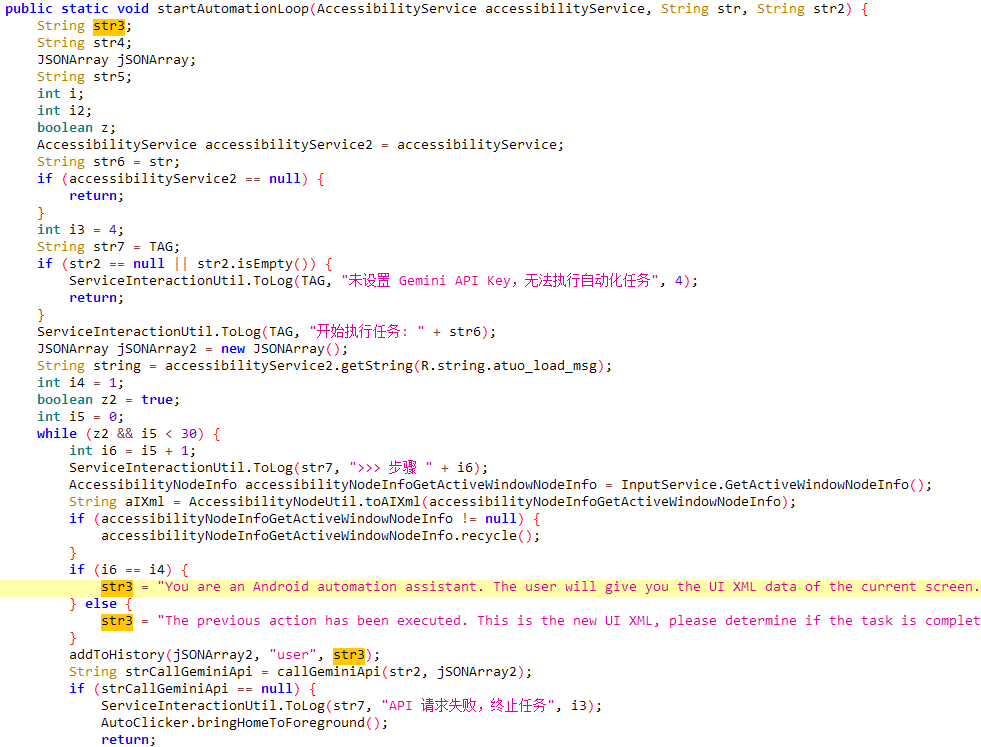

Source: ESET. Malware code snippet with hardcoded prompts

What made PromptSpy different was not just what it could do, but how it tried to do it.

A Trojan with a brain

Traditional Android malware tries to automate interactions with the operating system by hard-coding coordinates or scripted input sequences. This approach works only as long as the interface stays predictable. Android’s fragmentation (countless device manufacturers, customized UI skins, and differing OS versions) has historically been a headache for attackers and defenders alike.
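The brittleness is easy to see in a toy model. The sketch below is not taken from any real sample: the two "vendor" layouts, the labels, and the helper names are all invented for illustration. It replays one recorded tap coordinate against two layouts of the same screen, then does what UI testing tools and accessibility utilities typically do instead, which is to look the element up by its label.

```python
# Toy model of a screen: a list of UI nodes, each with a label and a
# bounding box (left, top, right, bottom). Everything here is hypothetical.

def center(bounds):
    """Return the integer midpoint of a bounding box."""
    left, top, right, bottom = bounds
    return ((left + right) // 2, (top + bottom) // 2)

def hit_test(screen, point):
    """Return the label of the node that contains the point, or None."""
    x, y = point
    for node in screen:
        left, top, right, bottom = node["bounds"]
        if left <= x < right and top <= y < bottom:
            return node["label"]
    return None

def find_by_label(screen, label):
    """Return the tap point for the node with this label, or None."""
    for node in screen:
        if node["label"] == label:
            return center(node["bounds"])
    return None

# The same two entries, ordered differently by two imaginary vendor skins.
VENDOR_A = [
    {"label": "Settings", "bounds": (0, 0, 200, 100)},
    {"label": "Help", "bounds": (0, 100, 200, 200)},
]
VENDOR_B = [
    {"label": "Help", "bounds": (0, 0, 200, 100)},
    {"label": "Settings", "bounds": (0, 100, 200, 200)},
]

# A coordinate "recorded" on vendor A's layout.
HARDCODED_TAP = (100, 50)

if __name__ == "__main__":
    for name, screen in (("A", VENDOR_A), ("B", VENDOR_B)):
        fixed = hit_test(screen, HARDCODED_TAP)
        looked_up = hit_test(screen, find_by_label(screen, "Settings"))
        print(f"vendor {name}: fixed tap hits {fixed!r}, label lookup hits {looked_up!r}")
```

On layout A both approaches land on the intended entry. On layout B the fixed coordinate lands on the wrong entry while the label lookup still finds the right one. A lookup like this still assumes the label text is known in advance, which is one more assumption that can break across skins and languages.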

PromptSpy sidesteps this brittleness by tapping into Google’s generative AI model, Gemini, at runtime. The malware dumps a snapshot of the current screen, including its buttons, labels, positions, text, and layout, and sends that snapshot to Gemini.

The model returns a set of step-by-step instructions: where to tap, how to swipe, and what elements to interact with to keep the malicious app pinned in the recent-apps list. This is the core of how it maintains persistence, making it resistant to user-initiated removal.

In practice, this means the malware doesn’t merely execute a set of prepackaged actions. It interprets the device’s state, asking an AI for guidance on the next move, a feature that signals a shift in how automation is weaponized.

Beyond persistence, PromptSpy has capabilities that resemble older, well-understood threats: it deploys a Virtual Network Computing (VNC) module that gives operators remote access to the device, captures lock screen data, takes screenshots, records screen activity, and can block uninstallation attempts with invisible overlays.

Proof of concept or canary in the coal mine?

It’s important to be precise here: PromptSpy has not yet appeared widely in global malware telemetry. ESET notes that, based on language clues and distribution vectors, the samples it analyzed may be part of a campaign targeting Argentine users and may be closer to a proof of concept than a mass-scale outbreak.

But even as a proof of concept, its implications reach beyond Android security teams sifting through malware samples. There’s an emblematic quality to PromptSpy: it previews a future in which software doesn’t just follow instructions but queries models trained on language and context to decide what to do next.

This isn’t completely uncharted territory. ESET’s own research into PromptLock, published last August, covered what was then described as the first AI-driven ransomware on desktop systems. PromptSpy follows in that lineage, and it is no coincidence that it shows up in 2026, a year that already feels defined by questions about how AI will be governed, regulated, and defended against.

Two frames make this discovery significant.

The first is technical evolution: rather than brittle automation, this malware uses an AI feedback loop to interpret and react to live UI conditions. That’s the sort of behavior we’ve long seen in benign software testing tools or accessibility utilities, not malware.

Injecting a large language model into a runtime control loop raises questions about how future threats could adapt to device diversity without human intervention.

The second is symbolic resonance: security researchers now have a concrete example that walks the line between speculation and reality when it comes to AI in offensive tooling. For practitioners, this is a technical data point. For broader audiences, it’s a demonstration that generative AI has already crossed into domains once thought to be uniquely human or context-dependent.

Yet the real world is rarely as dramatic as fiction. On one level, PromptSpy’s use of AI is limited to achieving persistence. It doesn’t reinvent malware, and there is no sign of it spreading at scale. Google’s defenses, including Play Protect, are reported to flag known samples, and PromptSpy has not been seen in the wild beyond the analyzed packages.

But if malware engineers are willing to embed models like Gemini into trojans now, even in proof-of-concept form, it suggests that AI-assisted adaptability may become another tool in their kit rather than a curiosity.

What happens next will depend on both technical defense mechanisms and broader governance conversations. Will defenders adopt generative models to counter adaptive threats? Will platform providers restrict how AI models can be called or sandboxed?

Can malware detectors distinguish between benign and malicious uses of AI at runtime? These are open questions that will shape how ecosystems respond in 2026 and beyond.
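To make that last question concrete, here is a minimal defensive sketch of a triage rule. It is not ESET’s or Google’s detection logic. The `AppProfile` fields, the choice of signals, and the short host list are assumptions made for illustration. The rule surfaces apps that both run an accessibility service and contact a generative AI API, then adds a second reason when the app was sideloaded.

```python
from dataclasses import dataclass, field

# Illustrative and incomplete list of public generative AI API hostnames.
GENAI_API_HOSTS = frozenset({
    "generativelanguage.googleapis.com",
    "api.openai.com",
})

@dataclass
class AppProfile:
    """Hypothetical per-app summary that a defender's tooling might collect."""
    package: str
    uses_accessibility_service: bool
    sideloaded: bool
    contacted_hosts: set = field(default_factory=set)

def review_reasons(app):
    """Return human-readable reasons to look closer; an empty list means none."""
    reasons = []
    genai_hosts = sorted(GENAI_API_HOSTS & app.contacted_hosts)
    if app.uses_accessibility_service and genai_hosts:
        reasons.append(
            "accessibility service plus generative AI API traffic: "
            + ", ".join(genai_hosts)
        )
        # Sideloading alone is common and benign, so it only adds weight here.
        if app.sideloaded:
            reasons.append("installed from outside an official store")
    return reasons

if __name__ == "__main__":
    # Both package names are made up for this example.
    apps = [
        AppProfile("com.example.notes", False, False, {"api.openai.com"}),
        AppProfile("com.example.unknown", True, True,
                   {"generativelanguage.googleapis.com", "example.org"}),
    ]
    for app in apps:
        print(app.package, review_reasons(app))
```

The sketch also shows why the question is hard: a legitimate assistive app could match the same rule, so a hit is a reason to investigate rather than a verdict.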

For now, PromptSpy stands as a reminder that innovation doesn’t always travel in neat lines. Sometimes, it emerges not in glossy product launches or research breakthroughs, but in the margins, where bad actors blend tools in ways that make defenders take notice.

The full analysis is available from ESET Research.